Microsoft OpenHack DevOps Paris

I had the pleasure of attending a three-day Microsoft OpenHack in Paris. It was primarily based on Kubernetes and focused on DevOps practices for achieving zero-downtime deployments, from the initial build of the container into Azure Container Registry through to a successful release, building a complete CI/CD pipeline along the way. It was a rather tense but exciting three days.

I worked in a team of six, which included two colleagues, Steven Nicholl and Rob Marks.

This blog is an overview of what we created. In following blogs I will go into detail on the points of interest mentioned in the summary below.

The context of the OpenHack Project

Your team is the IT team of a fictitious insurance company. The company is offering their customers the ability to evaluate their driving skills. A mobile application collects the data from the car and sends them to a set of APIs which evaluate the trip that has just been completed. Your customers can connect to a web application that uses the same APIs to review their trips and their driving score. Any downtime of the APIs would greatly impact your business.

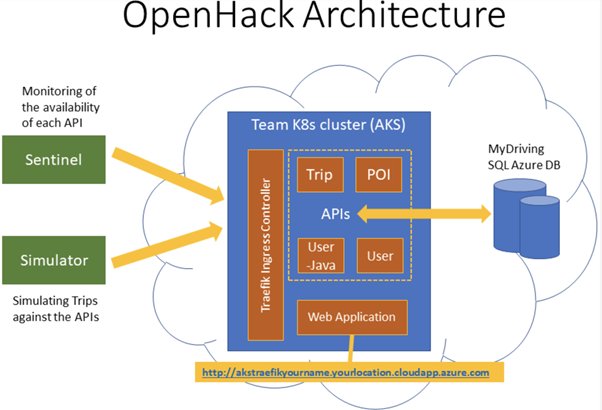

Architecture

The Kubernetes application is composed of:

- Web: The team website that your customers are using to review their driving scores and trips which are being simulated against the APIs.

- Trip: The trip API is where the mobile application sends the trip data from the OBD device to be stored.

- POI: The POI (Points of Interest) API collects the points of the trip where a hard stop or hard acceleration was detected.

- UserProfile: The user API is used by the application to read the user's information.

- UserJava: The user (Java) API is used by the application to create and modify the users.

Sounds fun? Let's get deploying!

Day 1

Tasks completed:-

- Establish your plan

- Implement Continuous Integration (CI)

- Implement Unit Testing

- Implement Continuous Deployment (CD)

We decided to use Azure DevOps for our CI/CD pipeline, along with its Boards for tracking tasks. Communication was through Microsoft Teams when we needed to share information, along with the Azure DevOps Wiki.

Branching strategy

We implemented a branch policy within our Azure DevOps repo. A policy helps to protect the team's code quality, controls how code is changed, and enforces the standards required for a successful branch merge.

Our policy included:-

- Require a minimum number of reviewers

- Disable 'Allow users to approve their own changes'

- Set 'Check for linked work items' to required

- Enforce a merge strategy

- Build Validation:- Each API had its own validation to complete
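The rules above can be pictured as a simple gate on each pull request. The following is only a sketch of that logic, not the Azure DevOps API: the `PullRequest` class, its field names, and the default of two reviewers are all hypothetical and chosen purely for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class PullRequest:
    """Hypothetical, simplified pull request model (not the Azure DevOps API)."""
    author: str
    approvers: list = field(default_factory=list)
    linked_work_items: list = field(default_factory=list)
    build_passed: bool = False


def policy_violations(pr: PullRequest, min_reviewers: int = 2) -> list:
    """Return the policy rules the pull request breaks; empty means mergeable.

    min_reviewers=2 is an assumed value, not one taken from our actual policy.
    """
    violations = []
    # With self-approval disabled, the author's own approval does not count.
    independent = [a for a in pr.approvers if a != pr.author]
    if len(independent) < min_reviewers:
        violations.append("not enough independent reviewers")
    if not pr.linked_work_items:
        violations.append("no linked work item")
    if not pr.build_passed:
        violations.append("build validation failed")
    return violations
```

A pull request approved only by its author and one colleague would be rejected with "not enough independent reviewers" under these assumed settings, even if the build passes and a work item is linked.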

Improve code quality with branch policies

Link work items to support traceability and manage dependencies

Continuous Integration (CI) is the process of automating the build and rigorous testing of code, to ensure the code is valid and no errors exist in the build. This is typically done every time a commit is made to the repo. It promotes automated testing of the code that is merged into a release branch of the repo.

CI emerged as a best practice because software developers often work in isolation, and then they need to integrate their changes with the rest of the team's code base. Waiting days or weeks to integrate code creates many merge conflicts, hard to fix bugs, diverging code strategies, and duplicated efforts.

docs.microsoft.com

A more thorough explanation into Continuous Integration

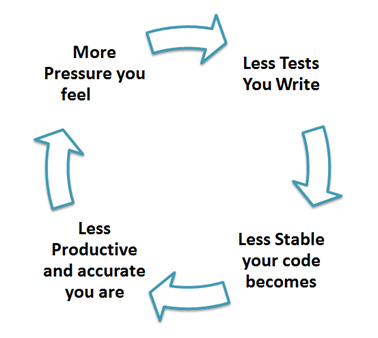

Do we need to unit test?

Yes you do!

Unit testing is the practice of dissecting your code into smaller, manageable pieces and individually subjecting each piece to a series of tests. These tests can vary from language to language, but the core concept stays the same. Most languages have unit testing frameworks.

The more often you test, the faster you can catch initial problems.
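As a small illustration of the idea, here is a sketch using Python's built-in `unittest` framework. The `is_hard_stop` function and its threshold are hypothetical, loosely inspired by the POI API's job of spotting hard stops; they are not the code we tested at the OpenHack.

```python
import unittest


def is_hard_stop(speed_before_kmh: float, speed_after_kmh: float,
                 seconds: float, threshold_kmh_per_s: float = 10.0) -> bool:
    """Flag a hard stop when deceleration exceeds an (assumed) threshold."""
    if seconds <= 0:
        raise ValueError("seconds must be positive")
    deceleration = (speed_before_kmh - speed_after_kmh) / seconds
    return deceleration > threshold_kmh_per_s


class HardStopTests(unittest.TestCase):
    def test_sharp_braking_is_flagged(self):
        # 60 km/h lost in 2 seconds is 30 km/h per second.
        self.assertTrue(is_hard_stop(80, 20, 2))

    def test_gentle_braking_is_not_flagged(self):
        # 10 km/h lost in 5 seconds is 2 km/h per second.
        self.assertFalse(is_hard_stop(80, 70, 5))

    def test_zero_duration_is_rejected(self):
        with self.assertRaises(ValueError):
            is_hard_stop(80, 20, 0)
```

Saved in a file, these tests can be run with `python -m unittest`, which is the kind of step a CI build can call on every commit.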

What did we implement?

- Options, epic creation

- Unit tests for each API

- Azure DevOps build logic for when a test failed

- Create an issue in Azure DevOps backlog if test failed

A successful Continuous Deployment..

Continuous Deployment (CD) is the concept in which successful changes to code are automatically released without human intervention and with minimal downtime, if any. CD removes the concept of a 'Release Day', moving to a more automatic approach in which a fully tested change is released through a successful build pipeline.

Day 1 finished with a successful CI/CD pipeline in Azure DevOps that built the above work items, using a branch/pull request policy to enforce workflow and gates between CI and CD activities.

Day 2

Tasks completed:-

- Implement a Blue/Green deployment strategy

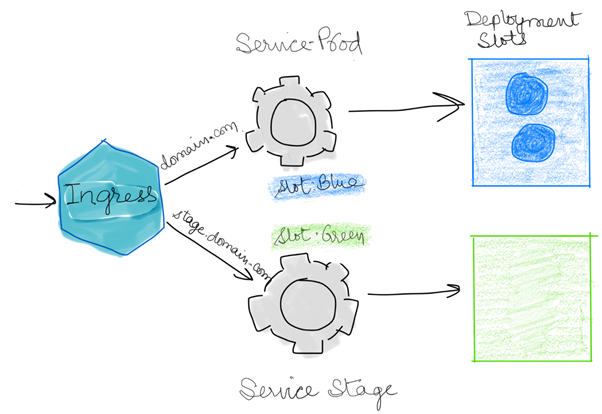

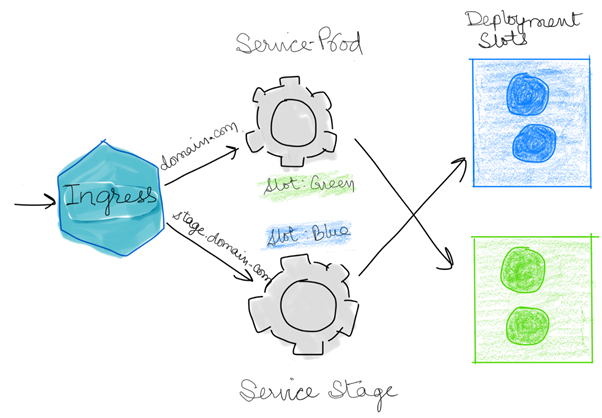

What is a Blue/Green deployment?

A Blue/Green deployment is a way of achieving a zero-downtime deployment of, or upgrade to, an existing application.

In theory, the 'Blue' version is the Production running copy of the application and the 'Green' version is the newer version of the existing application. Once the green version is ready, the versions would be swapped and 'Green' would then be the Production environment with all traffic being routed to this version.

In Kubernetes it is slightly different: rather than two different applications, there are two sets of containers. These containers act in the same way, and once the new environment is ready they are swapped over. In our Hack, we used Helm to achieve this.

Blue/Green deployment strategy using Kubernetes

Blue/Green deployment using Helm Charts

What did we configure?

A blue/green deployment that delivers zero-downtime deployments when required:-

- When a new build has been successfully created it tags the build and initiates a release

- This initiates a pipeline release

The pipeline release consisted of:-

- Helm Install

- Azure CLI to determine which set of pods is currently Production (Blue or Green?) and save this to an environment variable

- Deploys the new build to the staging Pods depending on the colour output from above

- Smoke tests the Staging test page to ensure a successful 200 response; if not, the step writes to STDERR and the release stops

- If the smoke test is successful, switch Staging to the Production slot (so, a blue->green swap or vice versa)

- Another smoke test, now on the Production URL

- If failure, revert back to original pods
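The control flow of those release steps can be sketched in a few lines. This is a simplified model only: in our pipeline the real work was done by Helm and Azure CLI tasks, whereas here `deploy`, `smoke_test` and `route_production_to` are hypothetical callables passed in so the logic can be read (and tested) on its own.

```python
from typing import Callable


def other_colour(colour: str) -> str:
    """Return the slot that is not currently serving Production."""
    if colour not in ("blue", "green"):
        raise ValueError(f"unknown colour: {colour}")
    return "green" if colour == "blue" else "blue"


def blue_green_release(
    current_production: str,
    deploy: Callable[[str], None],
    smoke_test: Callable[[str], bool],
    route_production_to: Callable[[str], None],
) -> str:
    """Run one release and return the colour serving Production afterwards.

    smoke_test receives "staging" or "production" and returns True on HTTP 200.
    All three callables are placeholders for real pipeline tasks.
    """
    staging = other_colour(current_production)
    deploy(staging)                          # new build goes to the idle slot
    if not smoke_test("staging"):
        return current_production            # stop: Production was never touched
    route_production_to(staging)             # swap Staging into Production
    if not smoke_test("production"):
        route_production_to(current_production)  # revert to the original pods
        return current_production
    return staging
```

If Blue is live and both smoke tests pass, the function returns "green". If the second smoke test fails, traffic is routed back and it returns "blue". The design point is that Production is only ever pointed at a slot that has already answered a smoke test.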

Configure your release pipeline for safe deployments

Production Slot now swapped Blue -> Green

Day 3

The final day of the OpenHack, tasks completed:-

- Implement a monitoring solution for your MyDriving APIs

- Implement Integration testing, code coverage and Load testing

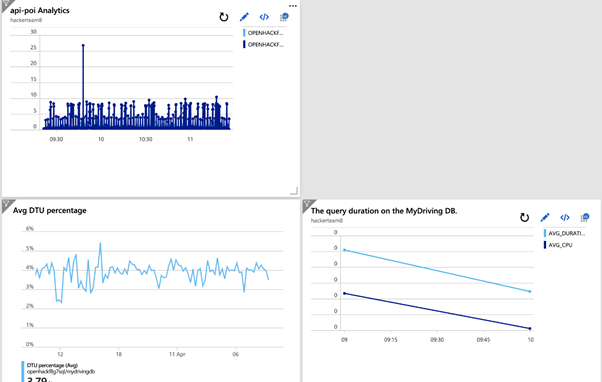

We had to define and implement a monitoring strategy, with the criteria:-

- Cluster monitoring that displays the CPU usage of each node.

- The average response time over a period of 10 seconds for 2 of the 4 microservices

- The query duration on the MyDriving DB.

- The percentage of DTU utilized on the SQL Server.

Let's get deploying. We implemented a centralised monitoring dashboard utilizing Azure Monitor and Log Analytics with Azure SQL Analytics.

Create and share custom dashboards in Azure Portal

Azure custom dashboard

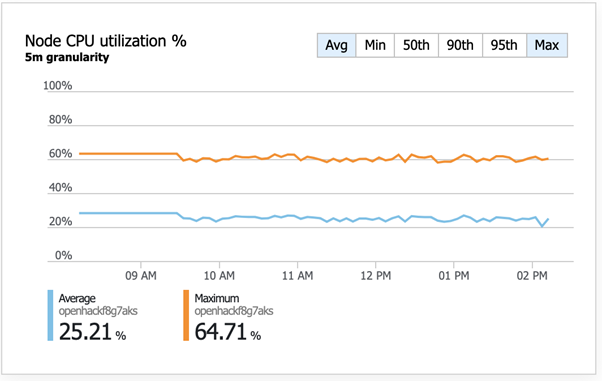

Next, we used Azure Monitor for containers, which has since received an update so that it no longer resides directly within Log Analytics.

Snippet of Azure monitor for containers

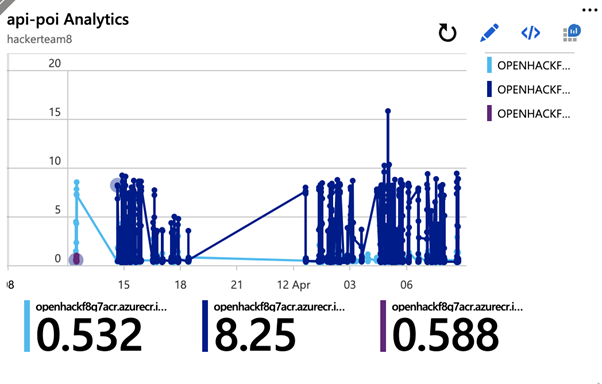

To complete the task 'The average response time over a period of 10 seconds for 2 of the 4 microservices' we had to write custom queries using Kusto (the Azure data query language).

The dashboard below was created by querying the microservices.
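To show what a 10-second average means in practice, here is a sketch of the same bucketing idea in plain Python. It is not our Kusto query: the sample tuple layout and service names are assumptions for illustration. In Kusto, the equivalent grouping is typically written with `summarize avg(...) by bin(<time column>, 10s)`.

```python
from collections import defaultdict


def average_by_window(samples, window_seconds=10):
    """Average response times per service per fixed time window.

    samples: iterable of (timestamp_seconds, service_name, duration_ms);
    this layout is a hypothetical stand-in for real request telemetry.
    Returns {(service_name, window_start_seconds): average_duration_ms}.
    """
    totals = defaultdict(lambda: [0.0, 0])  # key -> [sum of durations, count]
    for timestamp, service, duration_ms in samples:
        # Round the timestamp down to the start of its window.
        window_start = int(timestamp // window_seconds) * window_seconds
        bucket = totals[(service, window_start)]
        bucket[0] += duration_ms
        bucket[1] += 1
    return {key: total / count for key, (total, count) in totals.items()}


samples = [
    (0, "trips", 100),
    (4, "trips", 200),
    (12, "trips", 50),
    (3, "poi", 80),
]
averages = average_by_window(samples)
# averages[("trips", 0)] is 150.0: the two requests in the first 10 seconds
```

Each request lands in exactly one window, so a slow burst shows up in its own 10-second bucket instead of being smoothed away by a longer average.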

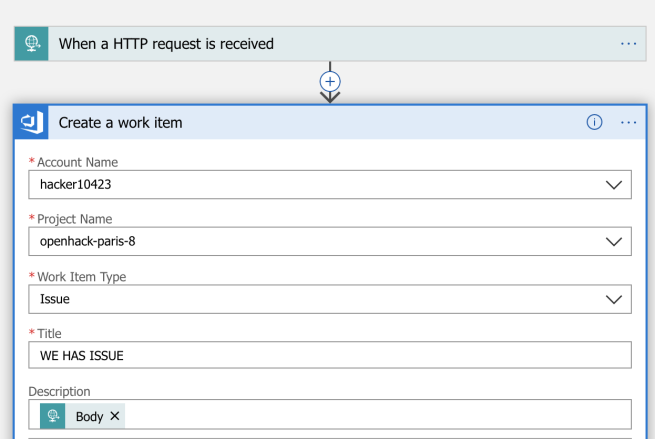

To finish, we included automatic issue logging to Azure DevOps work items for the above alerts, using a Logic App. This takes the alert body and adds it to the newly created issue.
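We did this with a Logic App, so no code was needed, but the shape of the data is worth a sketch. The Azure DevOps work item REST API accepts a JSON Patch document of field operations; the function below builds one from an alert. The alert's `name` key and the title format are assumptions, and a real alert body will have a different schema.

```python
import json


def work_item_patch(alert: dict) -> list:
    """Build a JSON Patch document describing a new work item for an alert.

    The 'name' key is a hypothetical field; adjust to the real alert schema.
    """
    title = f"Alert: {alert.get('name', 'unnamed alert')}"
    return [
        {"op": "add", "path": "/fields/System.Title", "value": title},
        # The whole alert body goes into the description for later triage.
        {"op": "add", "path": "/fields/System.Description",
         "value": json.dumps(alert, indent=2)},
    ]


patch = work_item_patch({"name": "High DTU", "severity": 2})
```

Keeping the full alert body in the description means whoever picks up the issue has the original context without going back to the monitoring dashboard.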

Summary

It was the end of a great event, and I certainly recommend attending any future OpenHacks. You get a solid mixture of tasks. The first couple start at the beginning of a CI/CD pipeline and build a foundation for your team: getting to know each other, discussing the strategy, and deciding which communication and source repo software you will be using.

As the tasks progress they become increasingly hard, with complexity increasing throughout. These challenges will teach you how to implement advanced CI/CD deployment scenarios, including the ability to create Blue/Green deployments.

I think having a team experienced in both software development and DevOps/infrastructure will give you the best chance of completing an OpenHack like this successfully. In addition, each team has an assigned Microsoft proctor who you can bounce ideas off and, if required, get a little assistance from.

Moving forward, I will be collating any new learnings and looking at adding these to current and future projects. I look forward to the next Microsoft event.